If you haven’t noticed, AI is already embedded in how we operate across biotech drug product development, shaping how we research, draft, compare, and communicate. The issue is less about adoption and more that we have not yet agreed on a shared language, approach, or constraints. This gap creates real risk, both for consultants and for the clients who rely on their output.

Across CMC, regulatory, clinical, and supply chain work, AI is being used quietly—in the shadows. Not because it’s prohibited, but because it’s undefined, ungoverned, and evolving faster than anyone’s policies can keep up.

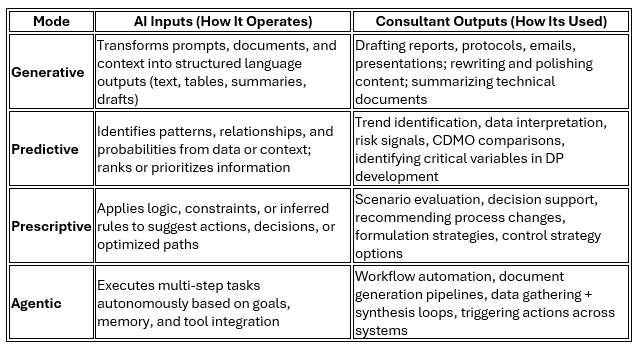

What we call “using AI” is also misleading. As a first attempt to understand its use, we can describe AI as operating in four modes, each reflecting how it works and how consultants can apply it in practice:

Most consultants are operating in the Generative mode. Fewer are intentionally engaging the others. Fewer still are thinking about how these modes influence decisions for which they are accountable.

That distinction matters the moment work leaves your desktop. In drug product (DP) development, outputs don’t stay theoretical: they inform formulation strategy, control strategy, process decisions, and regulatory positioning. At that point, the question is no longer whether AI was helpful. It’s whether the output is defensible, reproducible, and governed.

This is where a simple standard begins to emerge: the “Explainable Test.”

If you sign your name to the deliverable, you should be able to:

· Trace how it was developed

· Defend the reasoning behind it

· Reproduce it if challenged

Anything less introduces risk—especially in environments where decisions become batch records, deviations, or regulatory submissions.

The issue is not just technical—it’s economic and structural. Consultants see leverage: faster output, broader reach, increased productivity. Clients see exposure: IP risk, opaque processes, and uncertainty around how recommendations are formed. CDMOs and vendors sit downstream, where these decisions become operational reality. Without a free, open and honest discussion that leads to shared standards, each individual or entity will resolve this differently—ad hoc, and often inconsistently.

So here is the shift: the use of AI is not actually replacing consultants at the operational level. But when used, it redistributes where the effort and value sit. Drafting and summarization compress; judgment, interpretation, and accountability expand. The consultant who understands that shift, and governs it, will lead the next phase of the profession and define what great consulting looks like.

Right now, we don’t have that governance. We don’t have a control strategy. We don’t even have a shared definition of acceptable use.

This is the conversation this series is intended to start.

Questions to Consider

· If a client asked you to reproduce how a deliverable was created, could you do it—step by step?

· Where is AI influencing your work today: drafting, interpretation, or decision-making?

· Should AI use be standardized across consulting—or remain consultant-specific?

For Discussion

· Does AI increase consultant value, or compress it even as it increases productivity? How should this be priced in?

· Who owns the risk of AI-influenced decisions: consultant, client, or both?

· Should “explainability” become a professional requirement in regulated consulting work?

What Comes Next

Before we define standards for AI, we need to confront a more fundamental issue:

The consulting profession itself has never had a clear, enforceable definition.

AI didn’t create that ambiguity—but it has exposed it.

That is where the next article will go.